Why You Need to A/B Test Your Email Outreach

When your emails don’t generate the results you expect, there are a couple of fixes that can help every email sequence.

Then, there are some recommendations that apply only to some use cases.

But there are also things you need to figure out on your own to create that perfect email outreach sequence:

- Will a question in the subject line work better?

- Will you get a higher reply rate from contacting CEOs or CTOs?

- Will adding an image to your email body help your reply rate, or will it have a negative effect?

Nobody can answer these questions for you because they are case-specific: nobody is sending emails with your offer under your company name.

But how do you test all the various aspects of your email without burning through your contacts?

What is A/B testing in email outreach

A/B testing is a simple concept: you test two (or more) variants of a variable against each other to figure out which yields the best results.

For example, you can create two CTA (call-to-action) variants to see which gets you a higher reply rate.

Then you run an A/B test by splitting your outreach campaign audience in half: one half gets the A variant, and the other half gets the B variant.

Whichever variant generates the higher reply rate wins, making it a better CTA.
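To make the split concrete, here is a minimal Python sketch. It assumes your audience is simply a list of email addresses, and the helper names `split_audience` and `reply_rate` are hypothetical illustrations, not part of Hunter's product.

```python
import random

def split_audience(contacts, seed=None):
    """Randomly split a contact list into two equal-sized halves (A and B)."""
    shuffled = list(contacts)              # copy so the caller's list is untouched
    random.Random(seed).shuffle(shuffled)  # random order removes list-order bias
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]

def reply_rate(replies, sent):
    """Share of sent emails that received a reply (0.0 if nothing was sent)."""
    return replies / sent if sent else 0.0

# Hypothetical audience of 10 leads
contacts = [f"lead{i}@example.com" for i in range(10)]
group_a, group_b = split_audience(contacts, seed=42)
```

The shuffle matters: lead lists are often sorted by company, country, or import date, so splitting them in list order could build those differences into your variants.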

Why A/B testing works great with outbound lead generation

Email outreach lends itself particularly well to A/B testing, and here are two reasons why:

- A/B testing emails is practically free.

When A/B testing website copy changes, you need a specialized tool to launch the test, and it may take a long time before you see statistically significant results.

Testing ad variants can also take forever, and managing multiple ad variants is a chore.

But with outbound emails, A/B testing doesn’t cost anything, and as long as your email accounts are warmed up, you can quickly identify winning variants and implement them immediately.

- Your findings compound over time.

Apply what you learn from your A/B tests to your other campaigns.

Unless you make significant changes to your outreach (e.g., targeting an entirely different market segment), winning variants should have a compounding positive effect over months or years as you continue to engage your target market.

Email A/B testing best practices

Rule #1: Only test one variable at a time

This rule means the only thing that should differ between variants is your independent variable—the thing you’re testing.

That’s why you can’t send one sequence on Monday and another with a different CTA on Tuesday, then call it a CTA test: the difference in reply rates might come from the send day rather than the CTA.
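One way to enforce this rule is a quick sanity check on your variant definitions before launch. The sketch below assumes each variant is described as a plain dictionary of settings; the field names (`subject`, `cta`, `send_day`) are hypothetical and do not reflect Hunter Sequences’ data model.

```python
def differing_fields(variant_a, variant_b):
    """Return the sorted names of fields whose values differ between two variants."""
    keys = set(variant_a) | set(variant_b)
    # A field missing from one variant counts as a difference (None != value)
    return sorted(k for k in keys if variant_a.get(k) != variant_b.get(k))

# Hypothetical variants: everything except the CTA is identical, including the send day
variant_a = {"subject": "Quick question", "cta": "Open to learning more?", "send_day": "Tuesday"}
variant_b = {"subject": "Quick question", "cta": "Can I send more info?", "send_day": "Tuesday"}

changed = differing_fields(variant_a, variant_b)
assert len(changed) == 1, f"More than one variable differs: {changed}"
```

If the assertion fails, the test mixes several changes and you won’t know which one moved your reply rate.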

These things are difficult to control, but an automated sequencer like Hunter Sequences can help you overcome this challenge.

Rule #2: Aim for statistical significance

Statistical significance means the difference between your variants is unlikely to be a fluke caused by random chance.

Whether or not a test will achieve significance depends on:

- how many emails you send, and

- how strong the effect (the difference in the results between variants) is.

For example, you can send an A/B test with 1000 emails per variant. If variant A receives 10 replies and variant B receives 11, the effect isn’t strong enough to be statistically significant.

Conversely, if you send a test with only 50 emails per variant, even a difference of 5 replies between the variants is unlikely to be statistically significant because the volume is too low.
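To see why both examples fall short, here is a minimal sketch of a pooled two-proportion z-test, one common way to compare two reply rates. The function name is hypothetical, and the 5-vs-10 split in the second example is an assumed set of counts, since the text only specifies a 5-reply difference. A p-value below 0.05 is the usual threshold for significance.

```python
import math

def two_proportion_p_value(replies_a, sent_a, replies_b, sent_b):
    """Two-sided p-value of a pooled two-proportion z-test on reply rates."""
    rate_a = replies_a / sent_a
    rate_b = replies_b / sent_b
    # Pooled reply rate under the null hypothesis that both variants perform equally
    pooled = (replies_a + replies_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 1.0  # zero (or all) replies in both groups: no detectable difference
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution
    return math.erfc(abs(z) / math.sqrt(2))

# 1,000 emails per variant, 10 vs 11 replies
p_large = two_proportion_p_value(10, 1000, 11, 1000)
# 50 emails per variant, hypothetical 5 vs 10 replies
p_small = two_proportion_p_value(5, 50, 10, 50)

print(f"{p_large:.2f}")  # → 0.83
print(f"{p_small:.2f}")  # → 0.16
```

Both p-values are far above 0.05. Note that the z-test is a normal approximation; with very few replies, an exact test such as Fisher’s exact test is the safer choice.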

The math behind statistical significance is complex, but you don’t need to master it: simply use a statistical significance (sample size) calculator when planning your test to find out the volume you should aim for.
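If you’re curious what such a calculator does, here is a rough sketch of the standard normal-approximation sample size formula for comparing two proportions. The 5% baseline and 8% target reply rates are hypothetical inputs, and real calculators may use slightly different formulas or corrections.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate emails needed per variant to detect a change in reply rate."""
    if baseline_rate == expected_rate:
        raise ValueError("The rates must differ to detect an effect")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)          # chance of detecting a real effect
    pooled = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * math.sqrt(2 * pooled * (1 - pooled))
        + z_power * math.sqrt(baseline_rate * (1 - baseline_rate)
                              + expected_rate * (1 - expected_rate))
    ) ** 2
    return math.ceil(numerator / (baseline_rate - expected_rate) ** 2)

# Hypothetical plan: current reply rate is 5%, and you hope a new CTA lifts it to 8%
n = sample_size_per_variant(0.05, 0.08)
print(n)  # → 1059
```

The smaller the lift you want to detect, the more emails you need, which is why subtle tweaks call for far larger lists than bold changes.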

Rule #3: Always look at reply rates, even when optimizing for a higher open rate

In email outreach, positive replies should be your north star, so whatever you test, judge the result by whether it moves that metric in the right direction.

With tests that touch on your email subject line, you may also choose to temporarily enable open tracking to see how the open rate changes by subject line. But an improvement in open rates doesn’t always result in higher reply rates.
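Here is a small sketch of what "reply rate first" looks like when you compare results. The numbers are invented for illustration, and `VariantStats` and `pick_winner` are hypothetical helpers: variant B wins on opens, but variant A earns more positive replies, so A is the one to keep.

```python
from dataclasses import dataclass

@dataclass
class VariantStats:
    name: str
    sent: int
    opens: int
    positive_replies: int

    @property
    def open_rate(self) -> float:
        return self.opens / self.sent

    @property
    def reply_rate(self) -> float:
        return self.positive_replies / self.sent

def pick_winner(variants):
    """Choose the variant with the highest positive reply rate, ignoring open rate."""
    return max(variants, key=lambda v: v.reply_rate)

# Hypothetical results: B gets more opens (52% vs 40%), A gets more positive replies
a = VariantStats("A", sent=500, opens=200, positive_replies=20)
b = VariantStats("B", sent=500, opens=260, positive_replies=12)

winner = pick_winner([a, b])
print(winner.name)  # → A
```

Before declaring a winner in practice, you would still check that the reply-rate difference is statistically significant.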

Rule #4: Give it time

While email outreach typically gets most replies within a day or two of sending, some recipients take their sweet time.

That’s why you should give it at least a week before deciding on the winning variant of your A/B test.

Outreach aspects you should A/B test

A/B testing isn’t just a mechanism to pick winning copy; you can test nearly every aspect of the email outreach process to land on a sequence that turns leads into relationships.

Let’s look at them, ordered by priority, so you can start where it matters most.

Pick the right audience

Nothing is more important for getting positive replies than targeting the right organizations. But targeting isn’t always easy to figure out.

It makes sense to focus on the market that converts best, so consider testing the same offer with different audiences, such as:

- Companies headquartered in different countries

- Different industries/verticals

You can easily run these tests with Hunter Discover. Simply search for two different verticals, then create separate lead lists for these audiences, and plug them into duplicated sequences.

Figure out which role you’re resonating with the most

At Hunter, we send Sequences targeting companies in adjacent industries to promote Hunter on their websites.

But since multiple people are usually involved in maintaining website content, we’ve run some tests to determine which roles we should prioritize. And it turned out that targeting SEOs or similar roles worked much better than sending our emails to content marketing roles.

For you, the distinction may be between CEOs and CTOs, team leads and C-level executives, and so on.

Test different value propositions

There’s always more than one way to talk about your business.

The simplest distinction is between alleviating your customers’ pains and providing them with gains. For example:

- Hunter Sequences helps you scale email outreach with seamless automation

- Hunter Sequences protects your emails from landing in spam by sending them with deliverability in mind

While you may have a preference for one or the other, don’t assume your recipients share that preference—test your value proposition instead.

Pick the best subject line

The subject line is a key element of your sequence—especially if you’re sending your follow-up emails in the same thread (which is a best practice).

But how do you know which subject line will get people to open your messages?

One way is to track open rates. However, data shows that email tracking is associated with poor deliverability.

That’s where A/B testing comes in handy.

When testing subject lines, consider the following:

- Will a subject line phrased as a question perform better than one phrased as a statement?

- How long should it be? Will 1-2 words that spark curiosity outperform a longer line that shows some of the value in the message?

- How personalized should it be? On average, subject lines with two custom attributes (e.g., your recipient’s name and their company name) perform best—will that be true for your audience too?

Test different CTAs

Finding the optimal CTA is all about figuring out the amount of effort your recipients are willing to agree to based on your offer and the value you provide in your email sequence.

Our surveys show that decision makers prefer soft CTAs like “Open to learning more?” (preferred by 24% of respondents) or “Can I send more info?” (36%).

Is that the case for your sequence? Perhaps not. We’ve also seen very successful campaigns that directly ask for a meeting.

Try adding images or videos to your emails

Some email outreach experts report that using images or videos in your emails hurts your chances of landing in the primary inbox. Our internal data doesn’t show that negative effect.

Can an image tell the part of the story that you don’t want to squeeze into your email?

A/B test different types and levels of personalization

67% of decision makers say that messages referencing information about them online are more likely to receive a reply.

This should encourage you to personalize your messages. But what type of personalization will resonate?

Our recommendation is to keep personalization related to your offer; where your recipient went to school has little bearing on whether or not they struggle with the problem you can solve for them.

But that still doesn’t point to a specific type of personalization that will make you successful, and no data analysis will. Think of the data points you can use to prove you understand your recipients’ circumstances, and test them all.

Test different email lengths

Finally, you can settle this age-old debate for yourself: how long should your email be?

Our research shows that emails of all shapes and sizes can be successful.

The question really is, how much information do you need to convey in your email to maximize positive replies? Too little information may get some people curious and fly over others’ heads; if your email is too long, though, you’ll lose some people midway through the message.

Dissect your email drafts: prioritize all pieces of information and test them in various combinations.

How to A/B test email outreach with Hunter

Hunter Sequences offers a simple way to launch and manage A/B tests within your email campaigns.

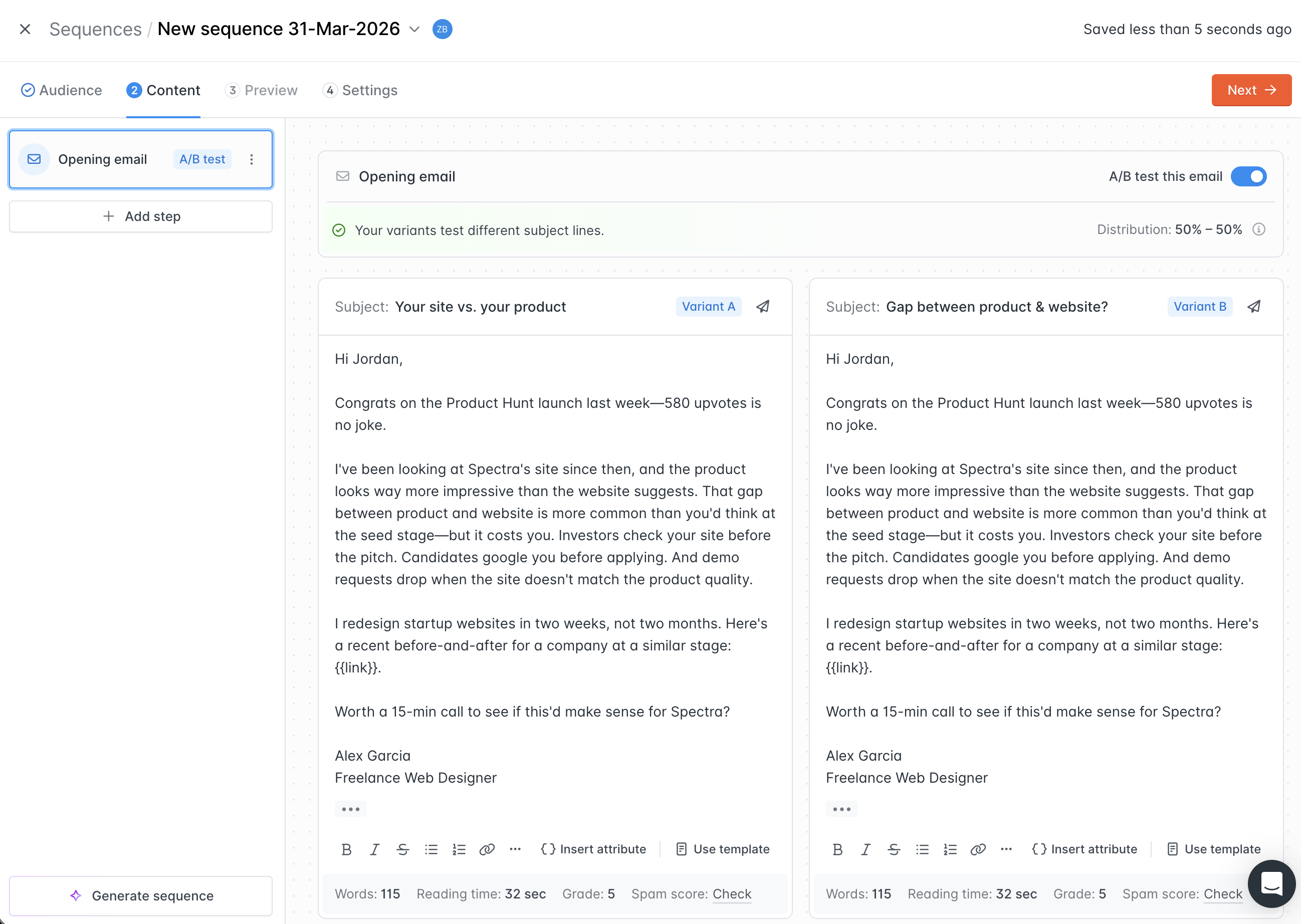

When composing your sequence, you simply toggle "A/B test this email" and prepare two variants of your message.

While it's possible to test multiple elements at the same time, we recommend focusing on one pronounced change to make the results easier to interpret.

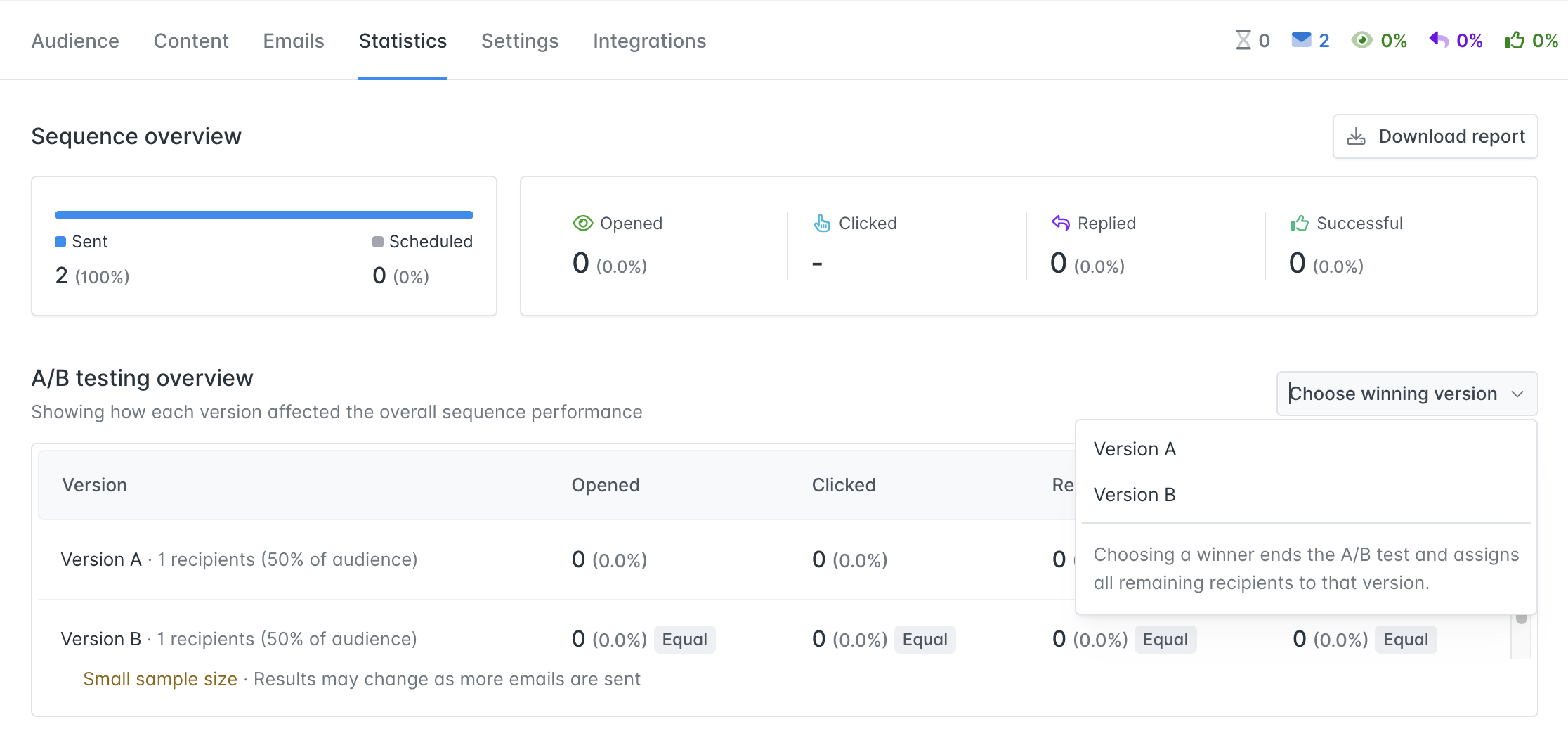

After your test runs, you can pick a winning variant in the Statistics tab for that sequence, and the remaining emails in the sequence will use that variant.

In the background, Hunter ensures that your tests are accurate by randomly assigning variants to your prospects, optimizing sending times, and checking if the results are statistically significant before you make your call.

The A/B testing feature is available on paid plans.

Send cold emails with Hunter
